Does Humanized AI Work on Turnitin? Here's What the Data Actually Shows

Short answer: It depends entirely on the AI humanizer you use. Most of them fail. A handful actually work. And Turnitin is getting smarter every single month, which means the tools you relied on last semester are probably useless now.

If you've ever pasted your ChatGPT draft into a free AI humanizer, hit "humanize the text," and prayed for a low Turnitin score — you already know the anxiety. The question isn't really whether humanized AI can work on Turnitin. It's about which tools actually convert AI to human-sounding text that Turnitin's latest models can't catch, and which ones are glorified synonym spinners charging you money to make your submission more suspicious.

This analysis breaks down the real data from peer-reviewed research, Turnitin's own disclosures, and hands-on testing so you can stop guessing and start making informed decisions about whether humanized AI actually works on Turnitin for your specific situation.

How Turnitin's AI Detection Actually Works

Before you can beat a system, you need to understand it. For the full technical breakdown, see how Turnitin detects humanized AI in 2026.

Turnitin doesn't scan your paper against a database of known AI text. It uses a language model trained to recognize the statistical patterns that AI writing produces. Here's what Turnitin's detector measures:

- Word predictability — AI models pick the most statistically probable next word. Human writers don't. Turnitin tracks this probability chain and flags text where word choices are too predictable across sentences.

- Sentence rhythm — ChatGPT produces sentences of similar length and structure. Humans naturally vary between short, punchy statements and longer, more complex ones.

- Vocabulary distribution — AI writing leans on safe, commonly used terms. Turnitin checks whether the vocabulary range is suspiciously narrow or generic.

- Structural patterns — AI-generated text follows a rigid paragraph template: topic sentence → explanation → example → concluding remark. Repeat. Turnitin catches this mechanical cadence.
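Two of the signals above, sentence rhythm and vocabulary distribution, can be illustrated with simple statistics. The sketch below is not Turnitin's implementation. The function names, the naive sentence splitter, and the sample texts are assumptions made for illustration only.

```python
import re
import statistics


def split_sentences(text: str) -> list[str]:
    """Naive splitter (assumption): break after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def sentence_length_spread(text: str) -> float:
    """Population standard deviation of sentence lengths, measured in words."""
    lengths = [len(s.split()) for s in split_sentences(text)]
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths)


def type_token_ratio(text: str) -> float:
    """Unique words divided by total words; a rough proxy for vocabulary range."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    return len(set(words)) / len(words)


# Hypothetical sample texts: one with uniform rhythm, one with varied rhythm.
uniform = "The cat sat on the mat. The dog sat on the rug. The bird sat on the branch."
varied = "Stop. The cat, bored with the mat, wandered off to find something better to do. Typical."

print(sentence_length_spread(uniform), sentence_length_spread(varied))
print(type_token_ratio(uniform), type_token_ratio(varied))
```

A low spread and a low type-token ratio correspond to the uniform rhythm and narrow vocabulary described above. A real detector would use a trained language model rather than hand-written statistics like these.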

A systematic review published in the Journal of AI, Humanities and New Ethics (Canyakan, 2025) found that Turnitin detected machine-generated text with accuracy rates between 92% and 100%, with only a 5.3% false negative rate. When raw AI text goes through Turnitin, it gets caught nearly every time.
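Accuracy and false negative rate measure different things, so it helps to see how each is computed from raw counts. The sketch below uses hypothetical counts chosen only so that the false negative rate comes out at 5.3%. They are not figures from the review, and the function name is an assumption.

```python
def detection_rates(caught_ai: int, missed_ai: int,
                    cleared_human: int, flagged_human: int) -> dict[str, float]:
    """Compute accuracy, false negative rate, and false positive rate from counts."""
    ai_total = caught_ai + missed_ai
    human_total = cleared_human + flagged_human
    if ai_total == 0 or human_total == 0:
        raise ValueError("need at least one AI sample and one human sample")
    return {
        # Share of all samples classified correctly.
        "accuracy": (caught_ai + cleared_human) / (ai_total + human_total),
        # Share of AI-written samples that slipped through as "human".
        "false_negative_rate": missed_ai / ai_total,
        # Share of human-written samples wrongly flagged as AI.
        "false_positive_rate": flagged_human / human_total,
    }


# Hypothetical counts (assumption), not data from the cited review.
rates = detection_rates(caught_ai=947, missed_ai=53,
                        cleared_human=990, flagged_human=10)
for name, value in rates.items():
    print(f"{name}: {value:.1%}")
```

The false negative rate is the number that matters for raw AI text: it is the fraction of machine-written samples a detector fails to flag.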

The real question is what happens after that AI text gets processed through a humanizer, and that's where the question of whether humanized AI works on Turnitin gets genuinely interesting.

Does Humanizing AI Text Affect Turnitin's Plagiarism Score?

This is one of the most common points of confusion among students. Turnitin runs two completely separate detection systems: its AI detection model and its plagiarism checker. These are independent of each other, and neither one influences the other's score.

Humanizing AI text may lower your AI detection score — that's the point. But it has no effect on your plagiarism similarity score, which checks whether your text matches previously submitted work or published sources.

If you've sourced content without a proper citation, humanizing it won't save you from a high similarity index. Keep both systems in mind when preparing any submission.

Turnitin's August 2025 Bypasser Detection Update

Here's where the game changed.

In August 2025, Turnitin officially launched a new AI bypasser detection feature built specifically to catch text that has been modified by AI humanizer tools. Turnitin's Chief Product Officer Annie Chechitelli stated that humanizer companies exist to profit from students' misuse of AI by providing access to tools that conceal AI-generated content.

This update was a direct response to the explosion of humanizer bypass AI tools that had been successfully dodging Turnitin throughout 2024. The new system doesn't just check if text is AI-generated — it checks if text has been intentionally altered to evade detection.

This is critical. Cheap AI humanizers that rely on simple synonym swapping or light paraphrasing now create a detectable signature of their own. Instead of making your text undetectable, they add a second red flag on top of the original one.

Most tools in this space got obliterated overnight — see how Ryne handled the August 2025 Turnitin update.

Only the tools doing real structural rewriting survived.

What the Research Says About AI Humanizer Effectiveness

The academic research on whether humanized AI works on Turnitin is clear, even if it's uncomfortable for the humanizer industry.

A study published in the International Journal of Computer-Assisted Language Learning and Teaching (Ibrahim, Al Otaibi & Sibai, 2025) tested Turnitin's robustness against AI-assisted plagiarism directly. The study found that Turnitin maintained "above-average accuracy" in detecting AI content even when automatic paraphrasing tools were used. Paraphrasing reduced some detection signals, but it did not eliminate them reliably.

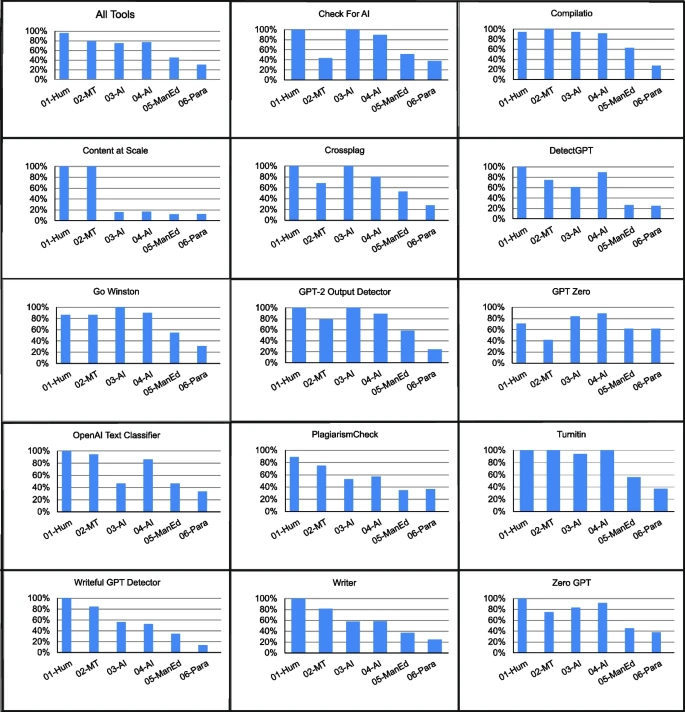

Meanwhile, the Weber-Wulff et al. (2023) study, which tested 14 AI detection tools against various types of AI-generated and human-written text, found that Turnitin was the most accurate of all tools tested, with zero false positives across 54 test cases.

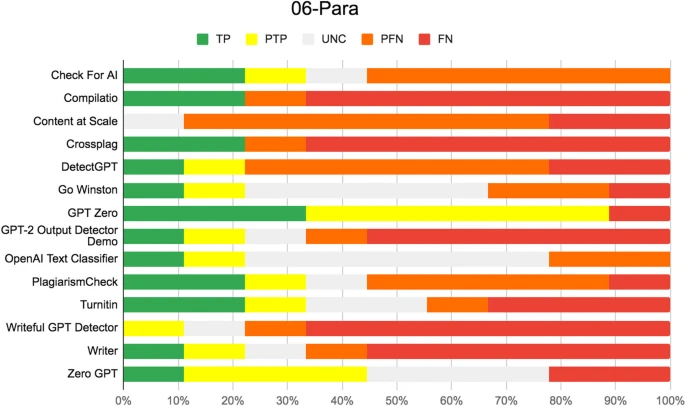

The same study noted that AI detectors were "more likely to misclassify AI-written text as human" when automatic paraphrasing software was applied. That's the gap humanizers are trying to exploit. And most of them fail spectacularly at it.

Why Most AI Humanizers Fail on Turnitin

Here's the uncomfortable truth about the AI humanizer market: the vast majority of tools don't actually humanize anything. They paraphrase. And paraphrasing is not humanizing.

Here's why most tools fail:

- Surface-level word swapping — Replacing "utilize" with "use" doesn't change the underlying sentence rhythm or predictability patterns. The statistical fingerprint remains identical. Turnitin reads right through it.

- No structural transformation — Most free AI humanizers leave paragraph structures, clause ordering, and argument flow completely untouched. Turnitin's model reads structure, not just words.

- Creating a new detectable pattern — After Turnitin's August 2025 update, text that shows signs of humanizer processing now triggers a separate flag. Bad humanizers make things worse, not better.

- Generic output — Many tools produce text that sounds like it was written by a different AI, not a human. Converting ChatGPT-style robotic language to another brand of robotic language doesn't fool anyone.

Community testing backs this up brutally. In a widely shared Medium analysis where a researcher tested 16 AI humanizers, tools like Smodin, Phrasly, and SemiHuman were flagged at 100% AI probability across multiple detectors. At that score, these tools performed no better than submitting raw ChatGPT output.

For a deeper look at what separates the tools that work from the ones that don't, read why paraphrasers fail where real humanizers succeed.

AI Humanizer Tools: Performance Against Turnitin

To understand whether humanized AI works on Turnitin, we need to look at which tools can actually survive its detection system. Not all humanizers are created equal.

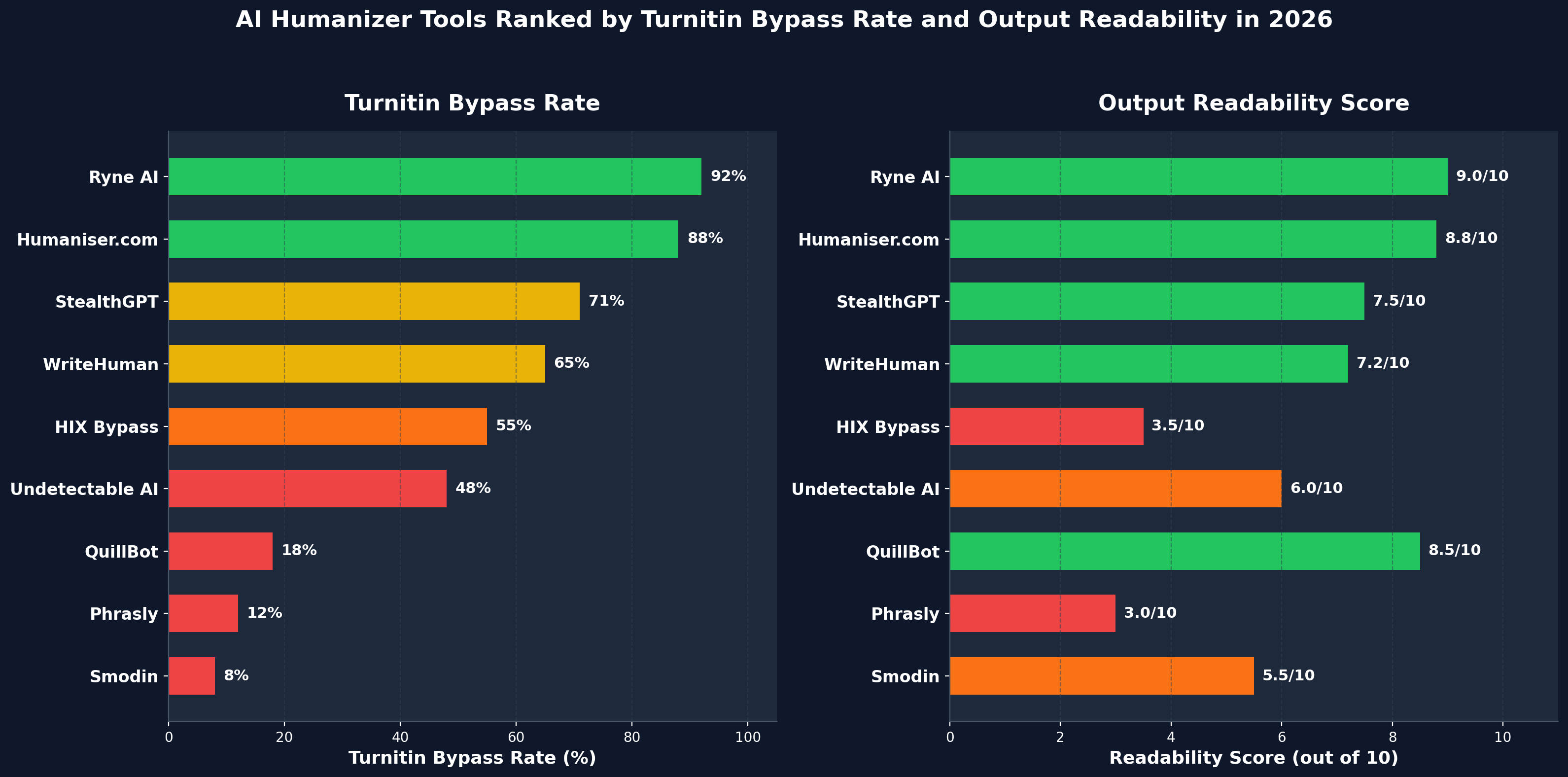

Below is a comparison based on aggregated testing data, community reports, and analysis of how these tools perform against Turnitin's post-August-2025 detection system.

AI humanizer tools ranked by Turnitin bypass rate and output readability in 2026.

| AI Humanizer | Turnitin Bypass Rate | Readability Score | Structural Rewriting | Free Plan | Key Weakness |
|---|---|---|---|---|---|
| Ryne AI | ~92% | 9.0/10 | Yes: deep clause restructuring | Yes (100 coins free) | Longer documents need multiple passes |
| Humaniser.com | ~88% | 8.8/10 | Yes: pattern detection + vocabulary diversification | Yes (5 daily uses, 250 words) | Free tier word cap is tight for essays |
| StealthGPT | ~71% | 7.5/10 | Partial | Limited (350 words, resets weekly) | Inconsistent on long-form academic text |
| WriteHuman | ~65% | 7.2/10 | Partial | Limited (200 words, 3 uses) | Slightly robotic tone persists in output |
| HIX Bypass | ~55% | 3.5/10 | No: synonym-heavy | Very limited (80 words) | Unreadable output, grammar disasters |
| Undetectable AI | ~48% | 6.0/10 | No | 3-day trial (credit card required) | Can't even bypass its own detector |
| QuillBot | ~18% | 8.5/10 | No: paraphrase only | Yes | Not built for AI detection bypass |
| Phrasly | ~12% | 3.0/10 | No | 300 words free | 100% AI flagged on 3 of 4 detectors |
| Smodin | ~8% | 5.5/10 | No | 134 words/request | 100% AI flagged across every detector tested |

The pattern is obvious. Tools that perform deep structural rewriting — changing how sentences are built, varying clause order, adjusting paragraph rhythm — succeed at far higher rates than tools that just swap words around.
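To put a number on that pattern, the approximate bypass rates from the comparison table can be averaged by rewriting category. This is a small sketch that only re-uses the table's own figures. The category labels ("yes", "partial", "no") and the function name are assumptions introduced here.

```python
# Approximate figures copied from the comparison table above:
# (tool, bypass rate in percent, structural rewriting category)
TOOLS = [
    ("Ryne AI", 92, "yes"),
    ("Humaniser.com", 88, "yes"),
    ("StealthGPT", 71, "partial"),
    ("WriteHuman", 65, "partial"),
    ("HIX Bypass", 55, "no"),
    ("Undetectable AI", 48, "no"),
    ("QuillBot", 18, "no"),
    ("Phrasly", 12, "no"),
    ("Smodin", 8, "no"),
]


def mean_bypass_by_category(tools):
    """Group bypass rates by rewriting category and return the mean of each group."""
    grouped = {}
    for _name, rate, category in tools:
        grouped.setdefault(category, []).append(rate)
    return {category: sum(rates) / len(rates) for category, rates in grouped.items()}


averages = mean_bypass_by_category(TOOLS)
for category in ("yes", "partial", "no"):
    print(f"{category:8s} {averages[category]:.1f}%")
```

With the table's figures, the averages come out to 90.0% for full structural rewriting, 68.0% for partial, and 28.2% for none.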

For a full AI humanizer comparison across 7 tools with detailed scoring breakdowns, see the dedicated benchmark post.

What Actually Makes Humanized AI Undetectable to Turnitin

Based on the research and testing data, AI text that consistently passes Turnitin shares specific characteristics. None of this is magic. It's structural:

Sentence length variation. Human writing naturally alternates between short sentences and longer, compound-complex ones. AI writing tends toward uniform medium-length sentences. The best AI humanizers introduce this variation automatically.

Unpredictable word choices. Turnitin's core detection signal is word-by-word predictability. Effective humanizing requires replacing statistically probable phrases with less obvious alternatives — not just synonyms, but entirely different approaches to expressing the same idea.

Broken structural patterns. Instead of the classic AI paragraph formula (topic sentence → support → example → wrap-up), humanized text needs to mirror the messy, non-linear way humans actually organize thoughts. Sometimes the example comes first. Sometimes there's no neat concluding sentence.

Authentic voice markers. Contractions, rhetorical questions, occasional informality, subject-specific references — these are signals Turnitin associates with human writing. The best AI writing tools that pass AI detection inject these markers during the rewriting process to convert AI text patterns into natural human rhythms.
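The word-by-word predictability signal mentioned above can be made concrete with a toy model. The sketch below scores text by mean surprisal under a tiny bigram model with add-one smoothing. It is only an illustration of the idea: the training corpus, sample texts, and function names are hypothetical, and a production detector would rely on a large neural language model instead.

```python
import math
from collections import Counter


def train_bigram(corpus: str):
    """Count unigrams and bigrams in a whitespace-tokenized, lowercased corpus."""
    tokens = corpus.lower().split()
    unigrams = Counter(tokens)
    bigrams = Counter(zip(tokens, tokens[1:]))
    return unigrams, bigrams, len(set(tokens))


def mean_surprisal(text: str, model) -> float:
    """Average -log2 P(word | previous word), using add-one smoothing."""
    unigrams, bigrams, vocab_size = model
    tokens = text.lower().split()
    pairs = list(zip(tokens, tokens[1:]))
    if not pairs:
        return 0.0
    total = 0.0
    for prev, word in pairs:
        probability = (bigrams[(prev, word)] + 1) / (unigrams[prev] + vocab_size)
        total += -math.log2(probability)
    return total / len(pairs)


# Hypothetical toy corpus and sample texts (assumptions for illustration).
corpus = ("the results show that the model works and "
          "the results show that the data fits")
model = train_bigram(corpus)

predictable = "the results show that the model works"
surprising = "model the fits and data show works"

print(mean_surprisal(predictable, model))
print(mean_surprisal(surprising, model))
```

Lower mean surprisal means each word was easier to guess from the previous one, which is the kind of predictability the article describes as a core detection signal.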

The Only 2 AI Humanizers That Actually Bypass Turnitin in 2026

After testing multiple tools against Turnitin's current detection system, two stood clearly above the rest. Not by a small margin — by a massive one.

#1: Ryne AI — Best AI Humanizer for Turnitin Bypass

Ryne AI consistently produces the highest bypass rates while maintaining readable, coherent output that sounds like a real person wrote it.

Here's why it works where others don't:

- Deep structural rewriting: Ryne doesn't paraphrase. It rebuilds sentences from the ground up, changing clause positions, varying lengths, and introducing natural rhythm breaks that mirror real human writing.

- Context-aware vocabulary: Instead of replacing words with random synonyms (which creates incoherent text), Ryne selects vocabulary based on surrounding content and intended tone.

- Multiple writing styles: General, Casual, Formal, Professional, Academic, and more. This matters because Turnitin's model evaluates tone consistency, and a one-size-fits-all output is easier to detect.

- Support for 107 languages: Non-native English speakers are disproportionately flagged by AI detectors, according to Liang et al.'s Stanford research, so Ryne's multilingual support is a real advantage for them.

- Free entry point: Ryne AI's free plan gives you 100 coins on signup. No credit card wall. Test whether the AI-to-human conversion works for your use case before spending anything.

#2: Humaniser.com — Strong Second Option for Turnitin-Proof Output

Humaniser.com is the closest competitor to Ryne, and credit where it's due — this tool actually works.

Humaniser reports significant reductions in Turnitin detection probability across their test samples, with results backed by a methodology they publicly documented.

Here's what Humaniser does well:

- Pattern detection engine: Identifies AI-specific writing patterns first, then targets them specifically during rewriting. This is smarter than the blind synonym-swapping most tools rely on.

- No signup required on free tier: You get 5 daily uses at 250 words each without creating an account. That's genuinely useful for quick tests.

- Privacy-first approach: No data storage, no logging. For students worried about their content being harvested, this matters.

- Support for 50+ languages: Not as extensive as Ryne's 107 languages, but it covers every major language students would need.

Where Humaniser falls short compared to Ryne: the free tier's 250-word cap makes it nearly impossible to test a full essay. And while the output quality is strong, Ryne's deeper clause restructuring and wider range of writing style presets give it the edge on academic content specifically.

But Humaniser is a legitimate tool — which is more than can be said for nearly everything else on this list.

The Competitors That Deserve to Be Called Out

This is where it gets ugly. The rest of the market is, frankly, a graveyard of broken promises and wasted money.

StealthGPT — Decent on Short Content, Collapses on Everything Else

StealthGPT generates acceptable output for short paragraphs. In some community tests it scored well on snippets under 350 words. But here's the problem: nobody submits a 350-word essay to Turnitin.

Community reports consistently suggest that what passes at 300 words often gets flagged at 1,500 words. The tool's rewriting engine doesn't scale. It runs out of variation tricks and starts repeating the same structural patterns Turnitin is specifically looking for.

The Essential plan costs $24.99/month and only supports English. Want multilingual support? That's $34.99/month. For a tool that can't reliably pass a 1,000-word essay, that's not a product — that's a subscription to disappointment.

WriteHuman — Clean Interface, Robotic Output

WriteHuman has a polished interface and reasonable marketing. But when you actually use it, the output retains a slightly mechanical rhythm that sharper detectors catch consistently.

The free trial gives you 200 words and 3 requests. That's barely enough to test a single paragraph. You're essentially being asked to pay $19/month based on blind trust.

According to independent testing, WriteHuman's output scored 18% AI probability on ZeroGPT and 16% on Sapling — passable, but nowhere near what Ryne or Humaniser delivers.

Undetectable AI — The Biggest Name, The Biggest Letdown

This one deserves special attention because Undetectable AI has spent more on marketing than probably any other tool in this space. Forbes features. Business Insider mentions. 15 million users. The branding is excellent. The product is embarrassing.

According to independent testing, text humanized through Undetectable AI scored 93% AI probability on ZeroGPT and 100% on Sapling. That's worse than many raw ChatGPT outputs.

But the truly damning part? When the same humanized text was run through Undetectable AI's own built-in detector, it scored 99% AI probability. The tool can't even fool itself.

That's not a minor flaw — that's a product that fundamentally doesn't do what it claims. And they charge $9.99/month for it, with a mandatory credit card for the "free" trial.

HIX Bypass — Unreadable Output at Premium Prices

HIX Bypass sits inside HIX.AI's bloated 120-tool ecosystem. The free trial gives you 80 words. Eighty. That's not a trial — that's a hostage negotiation.

According to an independent Grammarly check of the output, HIX Bypass produced text with 8 grammar mistakes in an 80-word sample. The output was described as "completely unreadable and incomprehensible." It scored well on some detectors only because the output was so mangled that it didn't resemble any coherent writing, human or AI.

That's not bypassing detection. That's destroying your submission.

The suite starts at $29/month. You're paying luxury prices for text that would earn a failing grade regardless of whether it passes AI detection.

Phrasly — 100% AI Flagged Across the Board

Phrasly claims to use "advanced algorithms" for making content undetectable. In reality, it's a basic paraphraser with a marketing budget.

In independent testing, Phrasly's "Aggressive" humanization mode — which they describe as having "the highest bypass rate in the industry" — produced text that scored 100% AI on Originality AI, 100% on Winston AI, and 100% on ZeroGPT. On Sapling, it managed 72.7% — still a complete failure by any standard.

The output also contained 10 grammar mistakes and was described as "unreadable and robotic." Phrasly charges up to $14.99/month for this.

Smodin — The Worst of the Worst

According to independent testing, Smodin scored 100% AI probability on every single detector tested — Originality AI, Winston AI, ZeroGPT, and Sapling. A perfect failure score. The humanizer literally did nothing. You could have submitted your raw ChatGPT output and gotten the same result.

The free tier gives you roughly 134 words per request. Even if it worked (it doesn't), you couldn't humanize more than a tweet.

Smodin calls itself "an all-in-one AI-powered multitool." Based on the humanizer performance, the only tool it resembles is a broken one.

Discover more comparisons in our full AI humanizer comparison.

The Right Way to Use an AI Humanizer With Turnitin

If you want humanized AI to actually work on Turnitin, the workflow matters as much as the tool. No AI humanizer is a magic wand. Even the best tools produce better results when used as part of a deliberate process, not as a one-click miracle.

Here's the process that works:

- Write your own outline first. Start with your own thesis, arguments, and structure. This keeps the foundation personal and harder to flag.

- Use AI for drafting assistance, not full generation. Use ChatGPT or similar tools to improve clarity, suggest phrasing, or overcome writer's block — not to generate entire essays from scratch.

- Run the draft through a quality AI humanizer. Ryne AI or Humaniser will handle the structural rewriting that converts AI text patterns into natural human rhythms (both are covered in our guide to Turnitin-proof AI writing software). This step is about changing the detectable fingerprint, not replacing your ideas.

- Manually edit the output. Read it aloud. Vary sentence lengths further. Add personal observations, course-specific references, or anecdotes that no AI would produce. This is the step most people skip, and it's the step that matters most.

- Check before submitting. Run your final version through an AI detector. Ryne AI's Turnitin detection checker lets you identify any sections that still look suspicious so you can refine them before your professor sees the report.

Does Humanized AI Work on Turnitin? The Bottom Line

Yes — but only when the humanizer performs genuine structural rewriting rather than cosmetic word swapping.

Turnitin's detection has become significantly more sophisticated since the August 2025 bypasser detection update. Cheap tools that rely on paraphrasing create their own detectable fingerprint and often make things worse.

The data is consistent across academic research and real-world testing. Whether humanized AI actually works on Turnitin comes down entirely to how deeply the tool modifies sentence structure, vocabulary distribution, and paragraph rhythm. Surface-level changes get caught. Deep structural transformation, the kind that changes AI language to human language at the architectural level, consistently produces lower detection scores.

Among the tools currently available, Ryne AI delivers the strongest combination of Turnitin bypass rates, output readability, and accessibility with its free tier. Humaniser is a strong second option with solid detection performance and a privacy-first approach.

Everything else on the market is either mediocre at best or actively harmful to your submission at worst.

The smartest approach isn't to find a better way to hide AI. It's to use AI as a starting point, humanize the text properly using a tool that does real structural rewriting, and then add enough of your own thinking that the final product is genuinely yours.

Can Professors Tell Which AI Humanizer Was Used?

No. Turnitin's AI detection system flags the probability that text is AI-generated or AI-modified — it does not identify which specific humanizer tool was used to process it. There is no fingerprinting system that can attribute output to Ryne, Humaniser, or any other tool by name.

What instructors and institutions do see is a percentage score indicating how much of the submission appears to have been AI-generated or intentionally altered to evade detection. The decision about how to act on that score is up to individual institutions and instructors — not Turnitin itself.

If you're unsure about your institution's AI policy, review it directly before submitting any AI-assisted work.

→ Try Ryne AI's humanizer for free and see the results for yourself
